MCP Gateways Aren't Enough: AI Agents Need Identity, Authorization, and Proof

MCP gateways solve routing. They don't solve agent identity, authorization, or proof. Here's what enterprise AI agents actually need to meet the zero-trust bar required before you trust them with your data.


Mark Fussell

CEO & Co-Founder

Josh van Leeuwen

Software Engineer

MCP Gateways Are Everywhere (and That's Good)

The Model Context Protocol (MCP) has rapidly become the default way agents reach the outside world: the common protocol for connecting an agent to databases, SaaS platforms, code runners, payment systems, and internal services. If your agents do anything useful, they almost certainly do it through MCP.

Naturally, MCP gateways are proliferating. Every infrastructure vendor, every service mesh company, and a growing number of open-source projects and start-ups are shipping MCP gateway solutions. And they provide real value: centralized routing, service discovery, credential management, and basic access control for MCP server connections.

These are table stakes. They are not the finish line for security.

If your enterprise is moving AI agents from prototypes to production, where they call APIs, execute code, query sensitive data, and interact with other agents on behalf of your business, routing and access control are necessary but nowhere near sufficient. The hard security problems are identity, authorization, and proof. And MCP gateways don't solve any of them.

The Three Gaps MCP Gateways Leave Open

Enterprise AI agent deployments must answer three questions before they can go to production. MCP gateways leave all three unanswered.

Gap 1: Identity. "Who Is This Agent?"

MCP gateways authenticate at the connection level. A request arrives with a valid API key or OAuth token, the gateway checks it against a list, and the request passes through. This tells you that the credential is valid. It does not tell you who is calling.

In practice, most AI agents operate with hardcoded API keys or shared service account tokens. When Agent A and Agent B both use the same service credential to reach an MCP server, the gateway sees two identical callers. There is no mechanism for the agent to cryptographically prove its identity, no certificate-backed assertion that a downstream service can independently verify.

This is a problem that the microservices world solved years ago with SPIFFE and mTLS. Every service gets a verifiable, cryptographic identity. When Service A calls Service B, the receiving service knows exactly who is calling and can prove it. AI agents written with frameworks today have none of this. They operate in an identity vacuum, and MCP gateways do nothing to change that.

The difference in practice is stark. Today, most agent calls arrive at a tool server looking like this:

Authorization: Bearer eyJhbGciOiJSUzI1NiJ9...

A token. Possibly shared across multiple agents. Possibly long-lived. With no information about which workload produced it, what context it was operating in, or whether it has been rotated since it was last issued. The tool server accepts it or rejects it. That is the entire identity story.

With SPIFFE-based workload identity, the same call arrives with a certificate-backed SVID, a cryptographically signed assertion that looks like this:

spiffe://diagrid.io/ns/payments/fraud-detection-agent

That URI is not a secret. It doesn't need to be. It is a verifiable claim issued by the platform, tied to the specific workload running in a specific namespace in a specific trust domain, automatically rotated, and provable by any service that trusts the same trust domain. The receiving tool server doesn't just check "is this credential on my allowlist?" It verifies: which workload is this, where is it running, and is it the workload that's supposed to be making this call?
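As a rough illustration of that last check, the sketch below splits a SPIFFE ID into its parts and compares it with the workload a tool server expects. It assumes the `spiffe://<trust-domain>/ns/<namespace>/<workload>` layout shown above and assumes the SVID itself has already been verified during the mTLS handshake; `WorkloadIdentity`, `parse_spiffe_id`, and `is_expected_caller` are hypothetical names, not part of any SPIFFE library.

```python
from dataclasses import dataclass
from urllib.parse import urlparse


@dataclass(frozen=True)
class WorkloadIdentity:
    trust_domain: str
    namespace: str
    workload: str


def parse_spiffe_id(spiffe_id: str) -> WorkloadIdentity:
    """Split an ID of the assumed form spiffe://<trust-domain>/ns/<namespace>/<workload>."""
    parsed = urlparse(spiffe_id)
    if parsed.scheme != "spiffe" or not parsed.netloc:
        raise ValueError(f"not a SPIFFE ID: {spiffe_id!r}")
    segments = parsed.path.strip("/").split("/")
    if len(segments) != 3 or segments[0] != "ns":
        raise ValueError(f"unexpected path layout: {parsed.path!r}")
    return WorkloadIdentity(parsed.netloc, segments[1], segments[2])


def is_expected_caller(spiffe_id: str, expected: WorkloadIdentity) -> bool:
    """True only if the (already verified) ID names exactly the expected workload."""
    try:
        return parse_spiffe_id(spiffe_id) == expected
    except ValueError:
        return False  # malformed IDs are rejected, never guessed at
```

Parsing the string proves nothing on its own. The comparison is only meaningful because the certificate carrying the ID was validated against the trust domain first.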

The first model secures the credential. The second model secures the identity. For AI agents operating autonomously across services, databases, and payment systems, that distinction is the difference between access control and zero trust.

For a standards-track perspective, the use of SPIFFE for workload identity is under active discussion in the IETF WIMSE working group and the AAIF Identity & Trust working group.

For a deeper dive into identity, read Agent Identity: The Foundational Layer that AI Is Still Missing.

Gap 2: Authorization Beyond Routing. "What Is This Agent Allowed to Do?"

Consider an agent with access to a customer database MCP server. The gateway permits the connection: the agent has a valid credential and the routing rule allows it. The agent is mid-workflow when the LLM, reasoning over an ambiguous instruction, constructs a call that deletes records matching a broad filter. Nothing in the gateway stops it. The routing rule said the agent could reach the server. It had nothing to say about what the agent could do once it got there.

This is the authorization gap MCP gateways leave open. They control access: which callers can reach which servers. They don't control behavior: which actions a specific agent identity is permitted to take, in which workflow contexts, under which conditions. For deterministic software, that distinction barely matters; you can reason about the call graph in advance. For AI agents, whose behavior is emergent and non-deterministic by design, it's the difference between a security model and an illusion of one.

What production requires is deny-by-default authorization at the workflow level: declarative policies specifying exactly which agent identities can invoke which workflows and tools, enforced at the platform layer regardless of what the LLM decides to do. These policies need to live in infrastructure configuration, owned by platform and security teams, version-controlled, and auditable. Not in application code where they can be skipped, overridden, or simply forgotten.

Gap 3: Proof. "What Has This Agent Done, and Can We Prove It?"

MCP gateways produce logs. Logs are useful. Logs are not proof.

Enterprise logging infrastructure has improved significantly. Modern SIEM platforms can make log pipelines tamper-resistant, and immutable audit trails are achievable with the right tooling. But even a perfectly preserved log answers the wrong question. When a compliance team asks "can you demonstrate that a human approved this tool call before the agent executed it?", a log entry that says tool: transfer_funds, status: approved, user: alice is not an answer. Logs provide no cryptographic guarantee that the recorded events actually occurred in the recorded sequence.

In multi-agent systems, this problem compounds. When Agent C invokes a destructive tool call, was it authorized by Agent B, which was authorized by Agent A, which was triggered by a human? Without a signed chain of custody that propagates across every agent boundary, there is no way to reconstruct or verify the decision chain.

A signed chain entry is not a log record. It is a structured, cryptographically verifiable object that travels with the workflow. A simplified entry looks like this:

    {
      "step": "fraud_check_passed",
      "agent": "spiffe://diagrid.io/ns/payments/sa/fraud-detection-agent",
      "timestamp": "2025-04-18T14:23:11Z",
      "result": "approved",
      "signature": "eyJhbGciOiJFUzI1NiJ9..."
    }

Every entry is signed by the identity that produced it. When the chain reaches a downstream tool server, it carries the full history: who initiated the workflow, which validation steps ran, whether a human approved, and which agent produced each entry. The tool server verifies every signature before it acts. An entry that cannot be verified because the signing identity is unknown, the signature doesn't match, or the expected step is absent causes the call to be refused before execution.
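The refusal logic described above can be sketched in a few lines. This is a minimal illustration, not the platform's implementation: it swaps in HMAC with a hypothetical per-identity key table (`KEYS`) so it runs on the standard library alone, whereas a real deployment would verify asymmetric signatures against each agent's identity certificate. The function names and the canonical JSON encoding are likewise assumptions.

```python
import hashlib
import hmac
import json

# Hypothetical key table. HMAC is a stand-in for certificate-backed signatures.
KEYS = {
    "spiffe://diagrid.io/ns/payments/sa/fraud-detection-agent": b"demo-key-fraud",
}


def _payload(entry: dict) -> bytes:
    """Canonical bytes of an entry, excluding its own signature field."""
    body = {k: v for k, v in entry.items() if k != "signature"}
    return json.dumps(body, sort_keys=True, separators=(",", ":")).encode()


def sign_entry(entry: dict, key: bytes) -> dict:
    """Return a copy of the entry with a signature over its canonical payload."""
    signature = hmac.new(key, _payload(entry), hashlib.sha256).hexdigest()
    return {**entry, "signature": signature}


def verify_chain(history: list[dict], required_steps: set[str]) -> bool:
    """Refuse if any signer is unknown, any signature fails, or a required step is absent."""
    seen = set()
    for entry in history:
        key = KEYS.get(entry.get("agent"))
        if key is None:
            return False  # unknown signing identity
        expected = hmac.new(key, _payload(entry), hashlib.sha256).hexdigest()
        if not hmac.compare_digest(expected, str(entry.get("signature", ""))):
            return False  # signature does not match the entry contents
        seen.add(entry.get("step"))
    return required_steps <= seen  # every expected step must be present
```

A tool server in this sketch would call `verify_chain(history, {"fraud_check_passed", "human_approval"})` and execute only when it returns `True`.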

The EU AI Act, emerging SEC guidance, and evolving SOC 2 requirements all converge on a clear expectation: enterprises must demonstrate verifiable control over and accountability for their AI systems.

What enterprises need is a tamper-evident, cryptographically signed record of every step in every agent workflow, not a log someone reviews after the fact, but a proof that downstream services verify in real time before they act. A tool server should be able to refuse a destructive tool call unless the signed history proves that a human approved it, a validation step passed, and the calling agent is authorized. MCP gateways have no concept of this.

What AI-Ready Infrastructure Looks Like

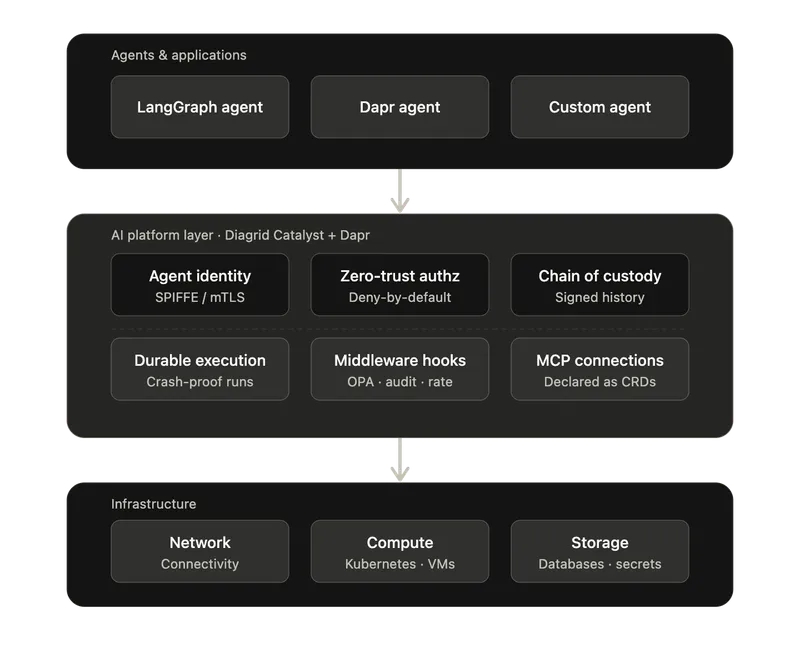

Closing the three gaps requires three capabilities that operate at the AI platform layer, below the agent or application code and above the network/compute.

Cryptographic Agent Identity

Every agent needs a SPIFFE-based cryptographic identity, a certificate issued and rotated automatically by the platform. When an agent calls a tool or another agent, the receiving service verifies identity via standard mTLS. This is not a token in a header. It is a certificate-backed assertion rooted in a trust chain that any participant can independently verify. The same battle-tested approach that secures microservice-to-microservice communication, extended to the unique challenges of non-deterministic, autonomous agents.

Zero-Trust Workflow Authorization

Identity without authorization is meaningless. Platform and security teams need declarative, GitOps-friendly policies that define which agent identities can invoke which workflows and tools. The default posture must be deny-all: anything not explicitly permitted is blocked. These policies must be enforceable at the platform layer.

This is the difference between "this agent can reach this server" (what gateways do) and "this specific agent identity can invoke this specific workflow on this specific target, and nothing else" (what production requires).
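A deny-by-default check of this shape can be sketched as follows. The rule structure, the target and workflow names, and the use of glob matching on workflow names are all illustrative assumptions; real policies would live in declarative configuration rather than in a Python list.

```python
from fnmatch import fnmatchcase
from typing import NamedTuple


class Rule(NamedTuple):
    caller: str    # exact SPIFFE ID of the calling agent
    target: str    # target application name
    workflow: str  # workflow name; glob patterns allowed


# Hypothetical allow-list. Anything not matched by a rule is denied.
RULES = [
    Rule("spiffe://diagrid.io/ns/payments/fraud-detection-agent", "payments-api", "fraud-*"),
]


def is_allowed(caller: str, target: str, workflow: str, rules=RULES) -> bool:
    """Allow only when an explicit rule matches caller, target, and workflow."""
    return any(
        caller == rule.caller
        and target == rule.target
        and fnmatchcase(workflow, rule.workflow)
        for rule in rules
    )
```

There is no "deny" rule anywhere in the sketch: with an empty rule list every call is refused, which is the property that matters.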

Policy-as-Code Over Signed History

Coarse identity-to-workflow rules are necessary but not sufficient. The truly interesting authorization questions are contextual: was this tool call preceded by a fraud-detection step? Did a human approve it within the last 30 seconds? Is the agent making this call the same one that received the original user request, or has the chain been hijacked?

These questions cannot be answered from the request alone. They require reasoning over the history of the workflow that led up to the call. With a cryptographically signed execution history propagated across every step, an Open Policy Agent (OPA) style policy engine can evaluate Rego (or any other policy language) against the entire chain at the moment of decision:

    allow_tool_call {
        input.history[_].step == "human_approval"
        input.history[_].step == "fraud_check_passed"
        input.history[_].agent == input.calling_agent
    }

The policy runs at the receiver, not the caller. Agents cannot fabricate history because every entry is signed by the identity that produced it. This turns the workflow history itself into a verifiable input to authorization, the same way OPA already evaluates JWT claims and request context for HTTP APIs, but now extended end-to-end across multi-agent, multi-tool execution chains.
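For readers less familiar with Rego, the rule above reads roughly as the Python below. Each `input.history[_]` expression iterates independently, so the three conditions may be satisfied by three different entries. The sketch assumes every signature in the history has already been verified, and the dictionary shape of `request` is an assumption that mirrors the policy input.

```python
def allow_tool_call(request: dict) -> bool:
    """Python reading of the Rego rule: three independent 'some entry exists' checks."""
    history = request.get("history", [])
    caller = request.get("calling_agent")

    has_approval = any(e.get("step") == "human_approval" for e in history)
    has_fraud_check = any(e.get("step") == "fraud_check_passed" for e in history)
    # The calling agent must itself have produced at least one entry in the chain.
    caller_in_chain = caller is not None and any(e.get("agent") == caller for e in history)

    return has_approval and has_fraud_check and caller_in_chain
```

Because the checks are independent, this rule says nothing about ordering or freshness. Questions such as "was the approval recent?" need additional conditions on the entry timestamps.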

Cryptographic Chain of Custody

When agents collaborate across service boundaries, every step must be signed. Each service signs the accumulated execution history with its own identity certificate, creating a tamper-evident chain. A downstream tool doesn't just check "does this caller have permission?" It verifies the entire history: who initiated the workflow, what approvals occurred, what validation steps passed, and whether every identity in the chain is authorized.

This is not a log someone checks later. It is a cryptographic proof verified before every tool call. It enables enforcement patterns that are impossible with routing alone: a payment MCP tool that refuses to execute unless the signed history proves a fraud-detection workflow passed and a human approved the transaction.

How This Works in Practice

These are not theoretical requirements. Diagrid Catalyst, the managed platform for running Dapr-based applications and AI agents in production, provides all of this today.

MCP as infrastructure. MCP server connections are declared as Kubernetes CRDs with connection details, credentials, and access scopes. No hardcoded connection logic in application code. Credentials are resolved from secret stores at runtime and never exposed to agent code.

Durable MCP tool calls. Every MCP tool call runs as a workflow inside the Dapr sidecar. If a process crashes mid-call, execution resumes from the last checkpoint. Long-running tool calls (database migrations, external API sequences, code execution) survive process and node failures automatically.

Middleware hooks. Before and after every tool call, configurable workflow hooks enable per-tool authorization, audit logging, rate limiting, input validation, and cost controls, without modifying agent code. These are workflows themselves, composable and independently deployable, and they are where you plug in OPA or any other policy engine to evaluate signed history before a tool actually runs.

Declarative Workflow Access Policy. A Kubernetes CRD that controls which app identities can start specific workflows and activities on a target application. Uses SPIFFE/mTLS-based identity for caller verification. Supports glob patterns, per-caller rules, and namespace-wide deny-all policies. When a policy exists, everything not explicitly allowed is denied.

Signed history propagation. Workflows opt in to sending their full execution history to child workflows and activities. Every identity that touches a workflow signs the accumulated history with its identity certificate. Downstream services verify the entire chain before acting, and policy engines can reason over it.

Human-in-the-loop with proof. Agents can pause for human approval via durable workflow events. When a downstream tool receives the signed history, it can cryptographically verify that approval actually occurred, not just that a log says it did.

Consider an agent processing a customer refund request above a defined threshold. Rather than executing immediately or failing, the workflow pauses and emits an approval event:

    Refund agent reaches $10,000 threshold
        │
        ▼
    Workflow suspends
        - emits approval request
        - sends Slack message to payments-approvals channel
        - records pending approval in signed history
        - sets 30-minute timeout
        │
    Human reviews in Slack
        │
        ├── Approves → workflow resumes
        │       approval step signed and appended to history
        │
        └── Denies / timeout → workflow terminates
                denial recorded in signed history
        │
        ▼
    Refund tool receives call
        - verifies signed history contains human_approval step
        - confirms approval timestamp within policy window
        - confirms approving identity is authorized approver
        - executes only if all checks pass

The approval is not a log entry added after the fact. It is a signed step in the execution history that the tool server verifies before it acts. If the approval step is absent, expired, or signed by an unrecognized identity, the tool call is refused, not audited after the damage is done.
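The refund tool's final checks can be sketched as below. The `approver` field, the approver list, and the five-minute policy window are hypothetical values chosen for illustration, and the sketch assumes the signatures on the history have already been verified.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

AUTHORIZED_APPROVERS = {"alice@example.com"}  # hypothetical approver list
APPROVAL_WINDOW = timedelta(minutes=5)        # hypothetical policy window


def approval_is_valid(history: list[dict], now: Optional[datetime] = None) -> bool:
    """True if the history holds a fresh human_approval step from an authorized approver."""
    now = now or datetime.now(timezone.utc)
    for entry in history:
        if entry.get("step") != "human_approval":
            continue
        # Accept a trailing "Z" on Python versions whose fromisoformat rejects it.
        approved_at = datetime.fromisoformat(entry["timestamp"].replace("Z", "+00:00"))
        fresh = timedelta(0) <= now - approved_at <= APPROVAL_WINDOW
        if fresh and entry.get("approver") in AUTHORIZED_APPROVERS:
            return True
    return False  # absent, expired, or unrecognized approval: refuse the call
```

The function returns `False` on every path except an explicit match, so a missing or malformed approval fails closed rather than open.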

The Question Enterprises Should Be Asking

The question is not "do we need an MCP gateway?" You probably do, and a gateway should certainly form part of your ingress connection management. MCP is the protocol, and you need infrastructure to manage connections to MCP servers at scale, handle routing, and apply some rate limiting.

The question is: does your MCP infrastructure give you identity, authorization, and proof?

Can your AI platform tell you exactly which agent made a call, not which credential was used, but which cryptographically verified identity? Can it enforce zero-trust, deny-by-default policies on what each agent is allowed to do, managed by your platform team and enforced regardless of what the LLM decides? Can it produce a tamper-evident, signed chain of custody that proves every step in every agent workflow, verifiable in real time by downstream services before they act?

If the answer is no, you don't have a security layer for AI agents. You have a router.

And routers are not enough.
